How to Make Your Website AI-Agent Friendly: What Cloudflare's Research Actually Means for You

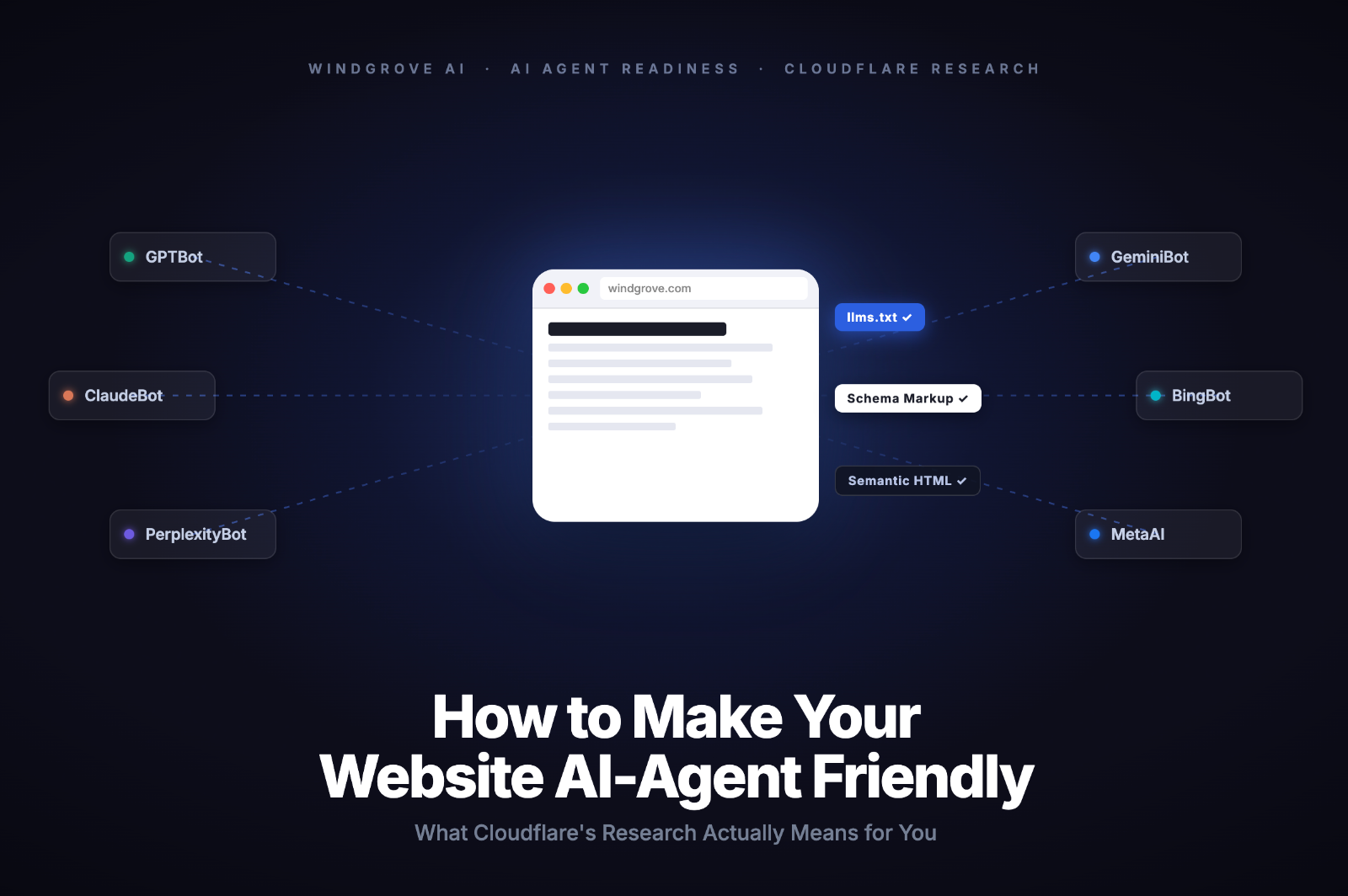

During Cloudflare's Agent Week in April 2026, their engineering team scanned the top 500,000 domains on the web and asked a simple question: are these sites ready for AI agents? The answer was blunt. Most are not. And the coverage that followed mostly missed the point, burying smaller site owners under a list of protocols they will never need.

This article cuts through that. If you run a content site, a blog, or a simple marketing website, here is what actually matters and what you can safely ignore.

Executive Summary

- AI agents are already visiting your website, but roughly 80% of what they receive is noise: CSS, JavaScript, navigation markup, and boilerplate your content never asked for.

- Cloudflare's research found that only 4% of sites declare AI usage preferences and just 3.9% support markdown delivery, the two changes with the clearest impact for content sites.

- Most agent-readiness guides treat every website the same. They should not. Content sites need three things: better discoverability, cleaner content delivery, and deliberate crawl governance.

- Implementing agent-readiness features without understanding the SEO implications is how you gain AI visibility and lose Google rankings at the same time.

Why Agent Readiness Is No Longer Optional

When an AI agent visits your site, it does not see what you see. It sends an HTTP request, receives a response, and tries to extract meaningful content from whatever comes back. On a typical modern website, that response is mostly structural debris: navigation menus, JavaScript bundles, cookie consent banners, footer links, and CSS that exists purely to make things look good for humans.

Cloudflare's Agent Readiness research measured this directly. Their finding: roughly 80% of a standard HTML response is irrelevant to an AI agent. The agent only wants the actual content. Everything else is token waste, and tokens cost money and time on the agent's side.
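To get a rough feel for this ratio on your own pages, the sketch below compares the length of the readable text to the length of the full HTML response. It is only an approximation: it counts characters rather than tokens, and the list of tags treated as noise (SKIP) is an assumption for illustration, not Cloudflare's methodology.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside tags we treat as noise for an agent."""

    # Assumed list of "noise" containers; adjust for your own templates.
    SKIP = {"script", "style", "nav", "header", "footer", "noscript"}

    def __init__(self):
        super().__init__()
        self.depth = 0   # how many skipped containers we are currently inside
        self.parts = []  # readable text fragments

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.parts.append(data.strip())


def content_ratio(html: str) -> float:
    """Share of the response (by characters) that is readable content."""
    if not html:
        return 0.0
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.parts)
    return len(text) / len(html)
```

Calling content_ratio on the saved HTML of a page returns a value between 0 and 1. A low value suggests most of the response is markup rather than content.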

This is not a niche problem. By early 2026, AI training crawlers alone accounted for nearly 50% of total web traffic. Coding assistants like Claude Code and OpenCode already send Accept: text/markdown headers with their requests, signalling that they prefer a cleaner format. Your site either responds usefully or it does not.

What it means for you: Your website has an AI audience right now, whether you have optimised for it or not. The question is not whether to act. It is which actions are worth your time.

Not Every Standard Applies to Your Site

The most important thing Cloudflare's research confirmed is something the broader coverage glossed over: different site types need different standards. A content site and an API-first product have almost nothing in common from an agent's perspective.

For a blog, publisher site, or marketing website, an agent has one job: find your pages, understand what they cover, and extract the content efficiently. There is no API to authenticate against, no MCP server to discover, no commerce layer to negotiate with.

The table below maps which standards actually apply to each site type.

| Standard | Content Site | API-Heavy Product |
|---|---|---|
| robots.txt + AI usage signals | Yes, high priority | Yes, high priority |
| sitemap.xml | Yes, high priority | Yes, high priority |
| llms.txt | Yes, useful | Useful for docs |
| Markdown content negotiation | Yes, if stack supports it | Yes, if stack supports it |
| API Catalog (RFC 9727) | No | Yes |
| OAuth / auth discovery | No | Yes |
| MCP Server Card | No | Yes, if you have an MCP server |
| Commerce / payment protocols | No | Only if you sell access programmatically |

A content site that scores poorly on API Catalog and MCP checks is not failing. It is being evaluated by the wrong criteria.

What it means for you: If you run a content site, you can ignore the bottom four rows of that table entirely. That eliminates roughly half the "agent readiness" checklist most guides push on you.

The Three Changes That Actually Move the Needle

Cloudflare's scan found that 78% of sites already have a robots.txt file. But the vast majority were written for traditional search crawlers, not AI agents. Only 4% declare any AI usage preferences. Just 3.9% support markdown content negotiation. The gap between "technically has a robots.txt" and "is actually agent ready" is where most smaller sites are sitting.

Here is where to focus first.

1. Fix your discoverability layer

Before an agent can read your content, it has to find it. Three things govern this.

- robots.txt audit: Many sites added blanket AI-blocking rules during the training data concerns of 2023 and 2024 and never revisited them. If you want AI search visibility, check whether you are accidentally blocking GPTBot, ClaudeBot, or PerplexityBot. Blanket blocks hurt AI citation potential without stopping the crawlers you actually want to keep out, because robots.txt is advisory and badly behaved crawlers simply ignore it.

- sitemap.xml health: Agents use sitemaps the same way Google does: as a shortcut to find your pages without parsing every link in your HTML. Make sure yours is current, submitted to Google Search Console, and includes your highest-value content.

- Content signals: A newer robots.txt extension lets you declare AI usage preferences at a granular level. You can allow AI search while restricting AI training. Only 4% of sites use this today, which means early adopters have a clear first-mover window.
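The robots.txt audit above can be sketched with Python's standard library. The function below parses the text of a robots.txt file and reports whether each of the three crawlers named above may fetch a given URL. The example.com URL and the function name are placeholders for illustration.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from the audit step above.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def audit_robots(robots_txt: str, url: str = "https://example.com/blog/post") -> dict:
    """Map each AI crawler to True (allowed) or False (blocked) for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in AI_CRAWLERS}


if __name__ == "__main__":
    # A leftover block from 2023: GPTBot is shut out, everyone else is allowed.
    rules = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    for bot, allowed in audit_robots(rules).items():
        print(f"{bot}: {'allowed' if allowed else 'BLOCKED'}")
```

Paste in the contents of your own robots.txt and test a few of your most important URLs, since path-specific rules can block some sections and not others.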

2. Make your content easier for agents to consume

Even when agents find your pages, Cloudflare's markdown research shows they receive up to 80% token waste in a standard HTML response. Markdown delivery eliminates most of that. Fewer tokens means faster processing, lower cost on the agent's side, and a higher chance your content gets read in full rather than truncated by context window limits.

Your two options:

- llms.txt: A plain-text file at your site root that gives agents a structured reading list of your most important pages. Think of it as a sitemap written for an LLM. Low effort, no engineering required. Start here if you have limited dev resources.

- Markdown content negotiation: Agents send an Accept: text/markdown header and your server (or CDN) converts HTML to markdown on the fly. More powerful, automatically stays current, but requires platform support or a developer to set up.
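For the second option, the core decision on the server is small: read the Accept header and decide which format to return. The sketch below is a simplified illustration, not a complete implementation of HTTP content negotiation. The function names are hypothetical, and the rule used here (serve markdown only when it is explicitly requested and ranked at least as high as HTML) is one reasonable assumption that keeps browsers on HTML.

```python
def parse_accept(header: str) -> dict:
    """Parse an Accept header into {media_type: q_value}. Missing q means 1.0."""
    prefs = {}
    for item in header.split(","):
        parts = [p.strip() for p in item.split(";")]
        media = parts[0].lower()
        if not media:
            continue
        q = 1.0
        for param in parts[1:]:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat an unreadable q value as "not acceptable"
        prefs[media] = q
    return prefs


def choose_representation(accept_header: str) -> str:
    """Return the content type to serve for this request."""
    prefs = parse_accept(accept_header)
    md_q = prefs.get("text/markdown", 0.0)
    # Fall back through wildcards to find how much the client wants HTML.
    html_q = prefs.get("text/html", prefs.get("text/*", prefs.get("*/*", 0.0)))
    if md_q > 0 and md_q >= html_q:
        return "text/markdown"
    return "text/html"


def response_headers(content_type: str) -> dict:
    """Vary: Accept tells caches that the body depends on the Accept header."""
    return {"Content-Type": f"{content_type}; charset=utf-8", "Vary": "Accept"}
```

A request with Accept: text/markdown gets markdown, while a typical browser header such as text/html,application/xhtml+xml,*/*;q=0.8 still gets HTML. The same URL serves both, so no new public URLs are created.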

3. Add structured data to your key pages

AI agents and crawlers use schema markup to understand what a page is about without inferring it from prose. For content sites, the highest-value schema types are Article, FAQPage, HowTo, and Organization. Beyond schema, semantic heading structure matters: a page with a clear H1, logical H2 sections, and concise opening paragraphs is dramatically easier for an agent to extract and cite accurately.
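As a minimal illustration of Article schema, the sketch below builds a JSON-LD script tag from a few page fields. The function name and the choice of properties are assumptions made for this example. Schema.org defines many more Article properties, and your CMS or SEO plugin may already emit this markup for you.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> tag containing Article JSON-LD for one page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2026-04-20"
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```

The returned string goes in the page head. Validate the result with Google's Rich Results Test before rolling it out across templates.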

What it means for you: These three changes improve both SEO and agent readiness simultaneously. None of them require you to build anything new. They work with your existing content and architecture.

Where Agent Readiness Can Hurt SEO If You Do It Badly

This is the section most guides skip entirely. And it is the one that costs teams the most.

Agent readiness is an early-stage practice. Some of the standards being discussed today are less than a year old. Some will not survive. In the rush to implement everything, teams are making changes that improve their agent readiness score while quietly damaging their search performance. One participant at Cloudflare's Agent Week put it plainly: sites implementing agentic features have seen measurable SEO penalties, gaining AI traffic while losing Google rankings.

The most common failure modes:

- Unblocking AI crawlers without thinking it through. If you added blanket blocks in 2023, reversing them carelessly can expose content you never intended to share. Be surgical, not sweeping.

- Creating new public URLs without indexation controls. Some implementations generate separate markdown endpoints at new URLs. If those are publicly accessible and not excluded from your sitemap, search engines may index them as thin or duplicate content.

- Serving different content to agents vs humans. Markdown content negotiation responds to a format request, not a user-agent check, so it is technically not cloaking. But if your markdown version strips sections, changes headings, or omits context that exists in the HTML version, you have a content equivalence problem. Both search engines and AI systems penalise inconsistency.

- Burning crawl budget on unnecessary infrastructure. New files, new endpoints, and new discovery mechanisms all cost crawl budget. For smaller sites with limited crawl allocation, this can slow down how quickly Google discovers your actual content.
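The content equivalence risk above can be partly checked automatically. The sketch below compares the H1 to H3 headings in the HTML version of a page with the headings in its markdown version and lists any that are missing. It is a rough check under stated assumptions: it only understands # style markdown headings, it does not skip fenced code blocks, and it says nothing about body text. The function names are hypothetical.

```python
import re
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects (level, text) pairs for h1, h2 and h3 elements."""

    def __init__(self):
        super().__init__()
        self.current = None  # [level, text so far] while inside a heading
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3"):
            self.current = [int(tag[1]), ""]

    def handle_data(self, data):
        if self.current is not None:
            self.current[1] += data

    def handle_endtag(self, tag):
        if self.current is not None and tag == f"h{self.current[0]}":
            text = " ".join(self.current[1].split())  # normalise whitespace
            self.headings.append((self.current[0], text))
            self.current = None


def html_headings(html: str) -> list:
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    return collector.headings


def markdown_headings(markdown: str) -> list:
    found = []
    for line in markdown.splitlines():
        match = re.match(r"^(#{1,3})\s+(.+?)\s*$", line)
        if match:
            found.append((len(match.group(1)), " ".join(match.group(2).split())))
    return found


def missing_headings(html: str, markdown: str) -> list:
    """Headings present in the HTML version but absent from the markdown."""
    in_markdown = set(markdown_headings(markdown))
    return [h for h in html_headings(html) if h not in in_markdown]
```

An empty list from missing_headings means the two versions share the same section structure. Anything it returns is a section an agent would never see.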

What it means for you: Implement one change at a time. Check your search performance after each one. The goal is a site that works for both audiences, not one that gains AI visibility at the expense of the rankings you already have.

What to Implement Now, Later, or Never

Use this framework to prioritise based on your site type and available resources. Every row has been filtered for content sites specifically.

| Implement Now | Implement If Relevant | Ignore For Now |
|---|---|---|
| robots.txt audit (AI crawler rules) | llms.txt (if you publish infrequently) | API Catalog (RFC 9727) |
| sitemap.xml health check | Markdown content negotiation (if your platform supports it) | OAuth / auth discovery |
| Semantic heading structure (H1, H2, H3) | Content signals in robots.txt | MCP Server Card |
| JSON-LD schema (Article, FAQ, HowTo, Org) | Link headers for key resources | Web Bot Auth / signed bot identity |
| Answer-first content formatting | llms-full.txt (for large content archives) | Commerce / payment protocols (x402) |

Implement Now applies to virtually every content site regardless of size. These changes improve both SEO and agent readiness simultaneously, carry no meaningful risk, and require no new infrastructure.

Implement If Relevant depends on your publishing cadence, hosting platform, and engineering capacity. If you publish weekly, llms.txt will go stale fast. If your CDN supports markdown delivery with a toggle, turn it on.
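One way to stop llms.txt going stale is to regenerate it from your page list whenever you publish. The sketch below assumes the layout described in the llms.txt proposal as commonly implemented (a title line, a short summary, then a list of links with descriptions). The function name, the "Key pages" section label, and the page dictionary keys are hypothetical, so check the current proposal before relying on the exact format.

```python
def build_llms_txt(site_name: str, summary: str, pages: list) -> str:
    """Build llms.txt text from a list of {"title", "url", "description"} dicts."""
    lines = [f"# {site_name}", "", f"> {summary}", "", "## Key pages", ""]
    for page in pages:
        lines.append(f"- [{page['title']}]({page['url']}): {page['description']}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder data; in practice this would come from your CMS or sitemap.
    pages = [
        {
            "title": "Agent readiness guide",
            "url": "https://example.com/agent-readiness",
            "description": "What content sites should implement first.",
        }
    ]
    print(build_llms_txt("Example Site", "Guides for content site owners.", pages))
```

Running this as part of your publish or build step keeps the file aligned with your current content.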

Ignore For Now is not a permanent verdict. These standards matter for API products, developer tools, and transactional platforms. But if you run a content site, none of them apply to your architecture today.

What it means for you: The "Implement Now" column is your entire to-do list for the next 30 days. Everything else can wait until the standards mature.

Audit Your Site in 30 Minutes

The fastest baseline is Cloudflare's Is It Agent Ready tool. Enter your URL and it runs 16 checks across discoverability, content, bot access control, and capabilities in about 30 seconds. Critically, it lets you customise the scan by site type, so a content site is not penalised for missing an MCP Server Card it was never meant to have.

Once you have your score, work through this checklist before making any changes.

Discoverability

- Open your robots.txt. Are you accidentally blocking GPTBot, ClaudeBot, or PerplexityBot where you want visibility?

- Is your sitemap.xml current and submitted in Google Search Console?

- Does your sitemap include your highest-value content pages?
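The last sitemap check can be done with a few lines of standard-library Python. The sketch below parses a standard sitemap.xml and reports which of your important URLs are not listed. It assumes a plain URL sitemap rather than a sitemap index file, and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set:
    """Return every <loc> URL found in a URL sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def missing_from_sitemap(xml_text: str, important_urls: list) -> list:
    """Return the important URLs that the sitemap does not list."""
    listed = sitemap_urls(xml_text)
    return [url for url in important_urls if url not in listed]
```

Feed it the text of your sitemap and a short list of your highest-value pages. URLs must match exactly, so watch for trailing slashes and http versus https differences.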

Content

- Do your top 10 pages have clear H1 headings, logical H2 structure, and concise opening paragraphs?

- Do key pages have Article, FAQ, or HowTo schema? Check with Google's Rich Results Test.

- Is an llms.txt file worth adding given how often you publish?

Governance

- Have you decided your AI usage stance: AI search allowed, AI training restricted, or both allowed?

- If you have added any new agent-facing URLs or files, are they handled correctly in your crawl directives?
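Once you have decided your stance, it can be written down as a robots.txt stanza. The sketch below assumes the directive name and keys from Cloudflare's Content Signals proposal as I understand it (Content-Signal with search, ai-input, and ai-train). Treat the exact syntax as an assumption and verify it against the current published policy before deploying. The function name is hypothetical.

```python
def content_signal_stanza(search: bool, ai_input: bool, ai_train: bool) -> str:
    """Build a robots.txt stanza declaring AI usage preferences for all agents.

    Assumed meanings: search = indexing for search results, ai_input = use as
    input to AI answers, ai_train = use for model training.
    """

    def yes_no(flag: bool) -> str:
        return "yes" if flag else "no"

    signal = (
        f"search={yes_no(search)}, "
        f"ai-input={yes_no(ai_input)}, "
        f"ai-train={yes_no(ai_train)}"
    )
    return "\n".join(["User-agent: *", f"Content-Signal: {signal}", "Allow: /"])


if __name__ == "__main__":
    # "AI search allowed, AI training restricted"
    print(content_signal_stanza(search=True, ai_input=True, ai_train=False))
```

These signals express a preference and do not enforce it. Crawlers you want to exclude outright still need explicit Disallow rules or blocking at the network level.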

Pick one improvement from each category. Implement it. Re-test. Then expand. Agent readiness is not a one-time sprint.

What it means for you: A 30-minute audit gives you a clear, prioritised list of what to fix. Most content sites will find two or three quick wins that take less than an hour to implement.

Frequently Asked Questions

Do I need to implement every agent-readiness standard to see results?

No. For content sites, the standards that matter are robots.txt governance, sitemap health, structured data, and optionally llms.txt or markdown delivery. Standards like API Catalogs, MCP Server Cards, OAuth discovery, and commerce protocols are designed for API-first products and transactional platforms. Implementing them on a content site adds complexity without any meaningful benefit.

Will adding llms.txt improve my rankings in AI search engines?

Not directly, and not on its own. llms.txt helps agents navigate your site more efficiently, but it does not guarantee citation or ranking. What actually drives AI citation is the quality and clarity of your content: clear headings, concise paragraphs, structured data, and answer-first formatting that lets agents extract useful information without guessing. llms.txt is a useful complement to good content, not a substitute for it.

Can implementing agent-readiness features hurt my Google rankings?

Yes, if done carelessly. The most common risks are creating new public URLs for agent-facing content without proper indexation controls, serving meaningfully different content to agents versus humans, and burning crawl budget on infrastructure that Google has to process before it reaches your real pages. Implement changes one at a time and monitor your search performance after each one.

Is markdown content negotiation the same as cloaking?

Technically no. Cloaking means serving different content based on who is requesting it (typically by user-agent). Markdown content negotiation responds to a format preference declared in the HTTP Accept header, part of the same standard content-negotiation mechanism the web already uses for language selection (via Accept-Language) and image format selection. The same content is served in a different format. That said, if your markdown version omits sections or changes the substance of your HTML version, you are creating a content equivalence problem that search engines and AI systems can both flag.

How do I know if AI agents are already visiting my site?

Check your server logs or analytics for known AI crawler user-agents: GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot, and OAI-SearchBot are the most common. Most analytics platforms do not report these separately by default, so you may need to look at raw log data. Cloudflare's dashboard also shows AI bot traffic if your site runs through their network. If you want to know how AI engines currently perceive and cite your brand specifically, Windgrove's AI visibility audit gives you a structured read on that.
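If you have access to raw access logs, a quick count of these user-agents is a reasonable starting point. The sketch below scans log lines for the four crawler names listed above. It matches on the whole line rather than parsing a specific log format, and the access.log filename is a placeholder. Keep in mind that user-agent strings can be spoofed, so treat the counts as indicative.

```python
from collections import Counter

# Crawler names from the answer above.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot"]


def count_ai_hits(log_lines) -> Counter:
    """Count requests per AI crawler by substring match on each log line."""
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for agent in AI_AGENTS:
            if agent.lower() in lowered:
                counts[agent] += 1
    return counts


if __name__ == "__main__":
    # Placeholder path; point this at your own server's access log.
    with open("access.log", encoding="utf-8", errors="replace") as handle:
        for agent, hits in count_ai_hits(handle).most_common():
            print(f"{agent}: {hits}")
```

Because the function accepts any iterable of lines, it can read a file handle directly without loading the whole log into memory.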