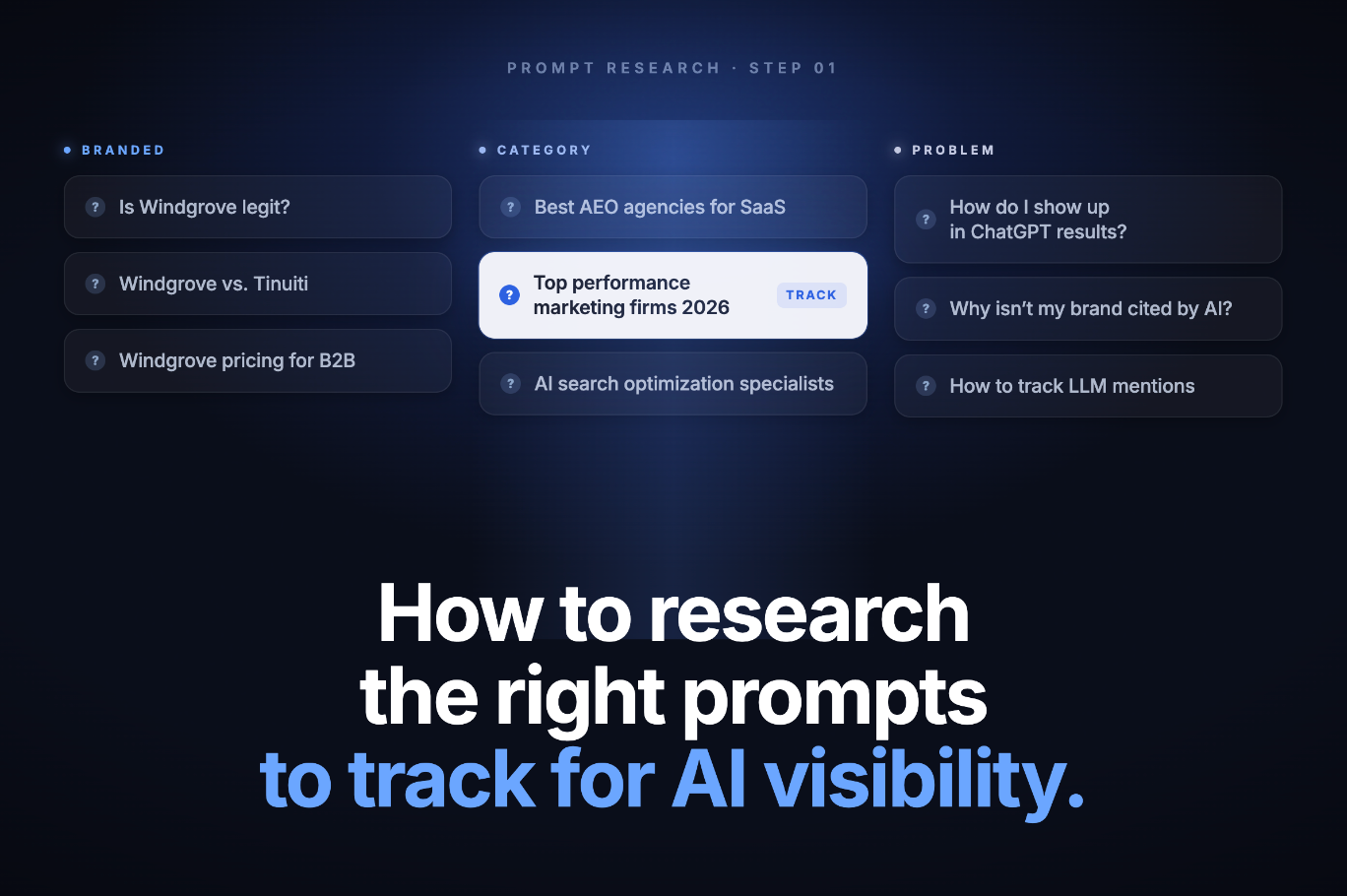

How to Research the Right Prompts to Track for AI Visibility

Executive Summary

- Most brands enter AI visibility tracking by guessing prompts, which produces data that confirms brand awareness rather than measuring real buying-stage visibility. The research process has to come before the tracking setup.

- The most reliable prompt sets are built from four sources: existing organic search data, buyer intent mapping, competitor positioning analysis, and direct LLM interrogation, each serving a different part of the funnel.

- Prompts should be segmented into category-level, comparison, and brand-specific groups and tracked separately. Mixing them inflates share-of-voice numbers and obscures where you actually have, or lack, AI presence.

- Tracking across two or three priority AI engines (ChatGPT, Perplexity, and Google AI Mode) for a minimum of 30 days produces statistically meaningful data. A single-engine, single-run snapshot tells you almost nothing useful.

The Prompt Research Problem Nobody Talks About

Most teams set up an AI visibility tool, type in a few prompts that feel relevant, and call it a tracking strategy. The problem is not the tool. The problem is that the prompts are guesses, and guesses produce data that looks meaningful but isn't.

The shift from keyword rankings to AI citations has changed what you need to measure. More than 80% of searches now end without a click, with AI engines producing synthesised answers rather than lists of links. Your brand either appears in those answers or it doesn't, and the only way to know is to track the right prompts. Tracking the wrong ones gives you a false sense of visibility.

This article covers the exact research process used to build a prompt tracking set that reflects how real buyers actually query AI engines, not how you wish they would.

The core principle: your tracking set should mirror your buyers' decision journey, not your marketing messaging.

Step 1: Start With Your Organic Search Data

The fastest way to build a relevant prompt list is to start with what you already know: the queries that send organic traffic to your site. These are real searches from real people, and they are the closest proxy you have to the questions buyers are asking AI engines today.

Export your top non-branded keywords from Google Search Console. Filter for queries with three or more words. These long-tail phrases are closest to the conversational style of AI prompts. A query like "best logistics software for mid-size companies" is already written the way someone would phrase it to ChatGPT.
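If you export the Search Console Performance report as CSV, the filtering above can be scripted. The sketch below assumes the export's query column is headed "Top queries" (check your own file) and uses "Acme" as a hypothetical brand name. It drops queries shorter than three words and any query that contains a brand term as a whole word.

```python
import csv
import io
import re

# Assumption: the Search Console "Queries" export labels its query column
# "Top queries". Change this if your file uses a different header.
QUERY_COLUMN = "Top queries"


def filter_prompt_seeds(csv_text, brand_terms, min_words=3):
    """Return non-branded queries with at least `min_words` words."""
    # Word-boundary patterns, so a brand term only matches as a whole word.
    brand_patterns = [
        re.compile(rf"\b{re.escape(term.lower())}\b") for term in brand_terms
    ]
    seeds = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        query = " ".join(row[QUERY_COLUMN].lower().split())
        if len(query.split()) < min_words:
            continue  # too short to resemble a conversational prompt
        if any(pattern.search(query) for pattern in brand_patterns):
            continue  # branded query: keep it out of the unbranded seed list
        seeds.append(query)
    return seeds


# Hypothetical sample export; "Acme" stands in for your brand.
sample = """Top queries,Clicks,Impressions,CTR,Position
best logistics software for mid-size companies,40,1200,3.33%,4.2
acme logistics software reviews,90,300,30%,1.1
logistics software,15,2000,0.75%,9.8
how does route optimisation software work,12,800,1.5%,6.0
"""
print(filter_prompt_seeds(sample, brand_terms=["Acme"]))
```

With this sample, only the two unbranded queries of three or more words survive; the branded review query and the two-word head term are dropped.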

Why AI Overviews Are a Strong Signal

Among these queries, pay attention to which ones trigger Google AI Overviews. Search Console does not report AI Overview appearances separately, so check them by running the queries yourself or with a rank tracker that flags the AI Overview feature. According to research from SE Ranking, a query that Google has already judged AI-answer-worthy is a strong candidate for your prompt tracking set. The reasoning is straightforward: if Google's AI is synthesising an answer for it, ChatGPT and Perplexity are likely doing the same.

This step alone can surface 30 to 50 high-quality prompt seeds in under an hour. The goal is not to track all of them. It is to use them as the foundation for the next stage of research.
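One simple way to turn seeds into a shortlist is to put AI Overview queries first and break ties by impressions. In this sketch the `triggers_ai_overview` flag is something you record yourself (for example from manual checks or a rank tracker), and the default cap of 50 mirrors the upper end of the range above. Both are assumptions for illustration, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class Seed:
    query: str
    impressions: int
    triggers_ai_overview: bool  # recorded from manual checks or a rank tracker


def shortlist_seeds(seeds, limit=50):
    """Rank AI Overview queries first, then by impressions, and cap the list."""
    # False sorts before True, so `not triggers_ai_overview` puts AIO queries first.
    ranked = sorted(seeds, key=lambda s: (not s.triggers_ai_overview, -s.impressions))
    return [seed.query for seed in ranked[:limit]]


seeds = [
    Seed("what is a transport management system", 900, False),
    Seed("best logistics software for mid-size companies", 1200, True),
    Seed("how does route optimisation software work", 800, True),
]
print(shortlist_seeds(seeds, limit=2))
```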

Step 2: Map Prompts to Buyer Intent Stages

Raw keyword data gives you volume. Intent mapping gives you strategy. Every prompt in your tracking set should be assigned to a stage of the buyer journey, because AI engines respond very differently to a question like "what is AEO" versus "best AEO agency for B2B companies."

The five intent stages worth mapping to are:

- Education: "What is [category]?" and "How does [solution] work?" prompts. These surface awareness-stage buyers.

- Recommendation: "Best [solution] for [use case]" and "Top [category] tools" prompts. This is where most purchase decisions begin in AI search.

- Comparison: "[Brand A] vs [Brand B]" and "alternatives to [Brand]" prompts. High-intent, late-stage queries.

- Pricing: "How much does [category] cost?" and "[solution] pricing" prompts. Buyers actively evaluating budget.

- Brand navigation: Direct queries about your brand or a competitor, such as "[Brand] reviews" or "is [Brand] legit."
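For a seed list of a few dozen queries, a first-pass stage label can be assigned with keyword rules and then reviewed by hand. The rules below are hypothetical and deliberately crude: they check comparison and pricing wording before recommendation and education phrasing, and fall back to brand navigation only when a brand term appears without any other signal. "Acme" is a placeholder brand.

```python
import re

# Hypothetical keyword rules; tune them to your category's vocabulary.
# Order matters: the first stage whose pattern matches wins.
STAGE_RULES = [
    ("comparison", r"\b(vs|versus|alternatives?|compared to)\b"),
    ("pricing", r"\b(cost|costs|price|pricing|how much)\b"),
    ("recommendation", r"\b(best|top|recommended)\b"),
    ("education", r"^(what is|what are|how does|how do)\b"),
]


def classify_intent(prompt, brand_terms):
    """Return a first-pass intent stage for one prompt."""
    text = prompt.lower()
    for stage, pattern in STAGE_RULES:
        if re.search(pattern, text):
            return stage
    if any(term.lower() in text for term in brand_terms):
        return "brand navigation"
    return "unclassified"  # review these manually


for prompt in ["what is AEO", "best AEO agency for B2B companies",
               "alternatives to Acme", "how much does AEO cost", "is Acme legit"]:
    print(prompt, "->", classify_intent(prompt, brand_terms=["Acme"]))
```

Anything labelled "unclassified" is a prompt the rules could not place, which is usually a sign the rules need a new keyword rather than that the prompt is unimportant.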

Why this matters for your data: a tracking set skewed toward education prompts will show strong AI visibility but will not tell you whether you appear when buyers are actually ready to purchase. Research from Meltwater confirms that prompt phrasing alone shifts which brands an LLM surfaces. "Best project management tools for small teams" and "enterprise-grade project management software with compliance controls" pull completely different brand sets from the same model.

Aim for roughly 60% recommendation and comparison prompts, 25% education, and 15% brand-specific. This weighting reflects where AI engines have the most influence over buying decisions.
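Turning those percentages into whole prompt counts is simple arithmetic, but naive rounding can leave you one prompt short or over. The sketch below uses the largest-remainder method (our choice, not something the weighting prescribes) so the counts always sum to your total; the group names are illustrative.

```python
# The 60/25/15 weighting from the text, as integer percentages.
TARGET_MIX_PCT = {"recommendation_comparison": 60, "education": 25, "brand": 15}


def target_counts(total_prompts, mix_pct=TARGET_MIX_PCT):
    """Split a prompt budget into whole-number counts that sum to the total."""
    if sum(mix_pct.values()) != 100:
        raise ValueError("percentages must sum to 100")
    # Integer arithmetic avoids floating-point rounding surprises.
    counts = {g: total_prompts * pct // 100 for g, pct in mix_pct.items()}
    remainders = {g: total_prompts * pct % 100 for g, pct in mix_pct.items()}
    leftover = total_prompts - sum(counts.values())
    # Hand any leftover prompts to the groups with the largest remainders.
    for group in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        counts[group] += 1
    return counts


print(target_counts(40))  # {'recommendation_comparison': 24, 'education': 10, 'brand': 6}
print(target_counts(50))  # {'recommendation_comparison': 30, 'education': 13, 'brand': 7}
```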

Step 3: Use Competitors to Find Prompts You Are Missing

Your competitors have already done a version of this research. Their paid search campaigns, their blog content, and their People Also Ask presence reveal the prompts they believe buyers are using. You can reverse-engineer that intelligence.

Use Google Ads' Auction Insights report to see which competitors are bidding in the same auctions as you, then pull their paid keywords from a third-party keyword research platform. Filter for queries with three or more words and cross-reference them against your existing prompt list. Any high-intent phrase a competitor is bidding on that you are not tracking is a gap in your AI visibility measurement.
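The cross-referencing step amounts to a set difference after light normalisation. This sketch only catches exact matches once case and spacing are normalised, so paraphrases of prompts you already track will still appear as gaps and need a manual check. The sample keywords are hypothetical.

```python
def normalise(query):
    """Lower-case and collapse whitespace so trivial variants compare equal."""
    return " ".join(query.lower().split())


def find_prompt_gaps(competitor_keywords, tracked_prompts, min_words=3):
    """Return competitor phrases of `min_words`+ words that you do not track."""
    seen = {normalise(p) for p in tracked_prompts}
    gaps = []
    for keyword in competitor_keywords:
        phrase = normalise(keyword)
        if len(phrase.split()) < min_words or phrase in seen:
            continue
        seen.add(phrase)  # also de-duplicates the competitor list itself
        gaps.append(phrase)
    return gaps


competitor_keywords = ["AEO agency pricing", "best AEO agencies for B2B", "aeo",
                       "AEO  agency vs in-house SEO team", "Best aeo agencies for b2b"]
tracked = ["Best AEO agencies for B2B"]
print(find_prompt_gaps(competitor_keywords, tracked))
```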

Interrogating the LLMs Directly

One of the most underused research methods is simply asking the AI engines themselves. Run a prompt like "What questions do buyers ask when evaluating [your category]?" across ChatGPT and Perplexity. The responses surface the language patterns these models associate with your market, which is likely close to the language they draw on when answering real buyer queries.

This method is particularly useful for uncovering long-tail comparison and alternative prompts. A follow-up like "What are the most common objections buyers have about [category]?" will surface negative-intent prompts, such as "[Brand] alternatives" or "why not to use [Brand]," that most teams never think to track but that represent real buying-stage conversations happening in AI search right now.
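If you paste a model's answer into a file, its question-style lines can be pulled out as candidate prompts. This is a sketch that only captures lines ending in a question mark, so non-question phrasings such as "[Brand] alternatives" still need to be picked out by hand. No particular model or API is assumed; the sample answer is made up.

```python
import re

# Matches leading list markers such as "1.", "2)", "-", "*" or a bullet character.
LIST_MARKER = re.compile(r"^\s*(?:[-*\u2022]|\d+[.)])\s*")


def extract_candidate_prompts(response_text):
    """Return de-duplicated question lines from a pasted LLM answer."""
    candidates = []
    for line in response_text.splitlines():
        cleaned = LIST_MARKER.sub("", line).strip().strip('"')
        if cleaned.endswith("?") and cleaned not in candidates:
            candidates.append(cleaned)
    return candidates


answer = """Buyers evaluating AEO agencies often ask:
1. What does an AEO agency actually do?
2. How much does AEO cost?
- "Is AEO different from SEO?"
Most also compare agencies on reporting."""
print(extract_candidate_prompts(answer))
```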

"LLM responses depend on how the question is phrased, which model you use, where you're located, and even when you ask." — Talkwalker

This variability is precisely why prompt research cannot be a one-time exercise. The queries buyers use evolve, and so do the answers AI engines generate in response to them.

Step 4: Segment Your Prompts Before You Track Them

The single most common mistake in AI visibility tracking is mixing brand-specific prompts with category-level prompts in the same reporting group. It inflates your numbers in a way that feels good but obscures the truth.

When your brand name appears in the prompt, AI visibility is nearly guaranteed. A prompt like "[Your Brand] vs [Competitor]" will almost always produce a response that mentions you. Counting that alongside a category prompt like "best B2B marketing agencies in Canada" distorts your share-of-voice metric significantly.

The Three Tracking Groups

Organise your prompt set into three separate groups before importing them into any tracking tool:

| Group | Example Prompts | What It Measures |
|---|---|---|
| Category | "Best AEO agencies for B2B" | Unbranded market visibility |
| Comparison | "AEO agency vs in-house SEO team" | Competitive positioning in AI answers |
| Brand | "Windgrove AI reviews" / "Is [Brand] legit?" | Brand-specific AI sentiment and accuracy |

Report on each group independently. Your category visibility score tells you whether you are winning new audiences. Your brand visibility score tells you whether AI engines are describing you accurately. These are different problems with different solutions, and treating them as a single metric means you will likely solve neither.
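Here is a sketch of grouping tracking results and reporting each group separately, alongside the pooled figure that mixing would produce. The grouping rule is an assumption: any prompt containing your brand name goes to the brand group first (because such prompts almost always mention you), then "vs" or "alternatives" wording marks a comparison, and everything else is category. "Windgrove AI" comes from the table above, "Acme" is a hypothetical competitor, and the results are made-up sample data.

```python
import re
from collections import defaultdict

COMPARISON = re.compile(r"\b(vs|versus|alternatives?|compared to)\b")


def tracking_group(prompt, brand_terms):
    """Assign a prompt to the brand, comparison, or category group."""
    text = prompt.lower()
    if any(term.lower() in text for term in brand_terms):
        return "brand"  # your own name is in the prompt, so a mention is near-certain
    if COMPARISON.search(text):
        return "comparison"
    return "category"


def visibility_by_group(results, brand_terms):
    """results: (prompt, brand_was_mentioned) pairs from your tracking runs."""
    totals, hits = defaultdict(int), defaultdict(int)
    for prompt, mentioned in results:
        group = tracking_group(prompt, brand_terms)
        totals[group] += 1
        hits[group] += int(mentioned)
    per_group = {group: hits[group] / totals[group] for group in totals}
    pooled = sum(hits.values()) / sum(totals.values())
    return per_group, pooled


results = [
    ("best AEO agencies for B2B", False),
    ("best AEO agencies in Canada", True),
    ("top AEO tools", False),
    ("top answer engine optimisation agencies", False),
    ("AEO agency vs in-house SEO team", True),
    ("Windgrove AI reviews", True),
    ("Windgrove AI vs Acme", True),
]
per_group, pooled = visibility_by_group(results, brand_terms=["Windgrove AI"])
print(per_group)         # {'category': 0.25, 'comparison': 1.0, 'brand': 1.0}
print(round(pooled, 2))  # 0.57
```

In this sample the pooled figure reports 57% visibility while category-level visibility is only 25%, which is exactly the inflation that separate reporting exposes.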

A practical benchmark: according to industry research, a well-structured prompt set should be roughly 75% unbranded prompts. This ensures your tracking data reflects where you are winning new audiences, not just confirming that AI engines know your brand exists.

Step 5: Choose Which AI Engines to Track and For How Long

Not every AI engine is worth tracking for every business. The right selection depends on where your buyers actually search, not on which platforms generate the most press coverage.

For most B2B brands, the starting point is three engines:

- ChatGPT: the largest user base of any standalone AI assistant, with broad coverage across all buyer stages

- Perplexity: citation-heavy behaviour, meaning it surfaces sources more explicitly and is particularly relevant for brands that want to understand whether their content is being cited

- Google AI Mode and AI Overviews: tied directly to traditional search intent, making them essential for any brand that currently relies on organic search traffic

Adding more engines before you have clean data from these three creates noise, not insight. Once you have a 60-day baseline from your priority set, you can evaluate whether Gemini, Claude, or Grok surface meaningfully different results for your category.

The 30-Day Minimum Rule

Research from SE Ranking confirms that citation patterns shift between runs, and a single snapshot is statistically unreliable. LLM responses are non-deterministic: the same prompt run twice on the same day can produce different brand mentions. You need at minimum 30 days of daily runs before you can distinguish a real trend from random variation.

This is the part most teams skip. They run a prompt set once, see their brand appear in three out of five responses, and report 60% visibility. Run the same prompts daily for a month and you will likely find the daily figure swinging anywhere between 20% and 80%, depending on the day, the model version, and the phrasing variation. The average across that period is your actual baseline.
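To see why one run is so unreliable, it helps to put an interval around the observed rate. The sketch below uses the Wilson score interval for a proportion, a standard statistical technique swapped in here for illustration rather than anything the cited research prescribes. It treats every response as an independent trial, which overstates precision somewhat because repeated runs of the same prompt are correlated, so read the intervals as optimistic. The month of data is hypothetical.

```python
import math


def wilson_interval(mentions, responses, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate."""
    if responses <= 0:
        raise ValueError("need at least one response")
    p = mentions / responses
    denom = 1 + z**2 / responses
    centre = (p + z**2 / (2 * responses)) / denom
    half_width = z * math.sqrt(p * (1 - p) / responses + z**2 / (4 * responses**2)) / denom
    return centre - half_width, centre + half_width


def baseline(daily_runs):
    """daily_runs: one (mentions, responses) pair per day of tracking."""
    mentions = sum(m for m, _ in daily_runs)
    responses = sum(r for _, r in daily_runs)
    return mentions / responses, wilson_interval(mentions, responses)


# One snapshot: 3 mentions in 5 responses.
print(wilson_interval(3, 5))  # roughly (0.23, 0.88)

# Hypothetical month of daily runs over the same 5 prompts, varying day to day.
month = [(1, 5), (4, 5), (3, 5)] * 10
rate, (low, high) = baseline(month)
print(f"{rate:.0%} baseline, interval {low:.0%} to {high:.0%}")
```

The single snapshot is consistent with anything from about 23% to 88% visibility, while the month of runs narrows the plausible range to roughly 45% to 61% around a 53% baseline.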

What Good Tracking Data Actually Tells You

A well-researched prompt set does not just tell you whether your brand appears. It tells you where you have content gaps, which competitors AI engines prefer over you for specific intent stages, and whether the way AI engines describe your brand matches how you want to be positioned.

The two metrics that matter most right now are brand mentions and citations. A brand mention means your company name appeared in the AI-generated answer. A citation means the AI linked to or explicitly referenced your content as a source. According to Nick Gallagher, Sr. SEO Strategy Director at Conductor, citations are the higher-value signal: they indicate the AI engine trusts your content enough to surface it as evidence, not just name-drop your brand.
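Both signals can be scored per response. This sketch assumes you have stored each answer's text and the list of source URLs the engine displayed. It counts a mention when the brand name appears as a whole word, and a citation when any source URL sits on your domain or one of its subdomains. Explicit references to your content without a link are not caught, and "Windgrove AI" and windgrove.example are placeholders.

```python
import re
from urllib.parse import urlparse


def is_own_domain(url, brand_domain):
    """True if the URL's host is brand_domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == brand_domain or host.endswith("." + brand_domain)


def score_response(answer_text, cited_urls, brand_name, brand_domain):
    """Return (mentioned, cited) for a single AI-generated answer."""
    # \b word boundaries assume the brand name starts and ends with a letter or digit.
    mention_pattern = re.compile(rf"\b{re.escape(brand_name)}\b", re.IGNORECASE)
    mentioned = mention_pattern.search(answer_text) is not None
    cited = any(is_own_domain(url, brand_domain) for url in cited_urls)
    return mentioned, cited


answer = "Windgrove AI and Acme are both frequently recommended AEO agencies."
sources = ["https://www.acme.example/blog/aeo", "https://review-site.example/agencies"]
print(score_response(answer, sources, "Windgrove AI", "windgrove.example"))  # (True, False)
```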

When your tracking data shows strong brand mentions but weak citations, the problem is usually content structure. The AI knows you exist but does not trust your content enough to use it as a source. When your data shows weak brand mentions on category-level prompts, the problem is authority: the AI does not associate your brand with the category at the level needed to surface you in competitive answers.
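The two diagnoses can be expressed as simple rules over your tracked rates. The cut-offs here (50% or more counts as strong, under 20% as weak) are hypothetical placeholders rather than benchmarks; set them from your own baseline.

```python
def diagnose(mention_rate, citation_rate, category_mention_rate,
             strong=0.5, weak=0.2):
    """Map tracked rates (0 to 1) to the two problems described in the text.

    The strong/weak cut-offs are illustrative defaults, not industry benchmarks.
    """
    findings = []
    if mention_rate >= strong and citation_rate < weak:
        findings.append("content structure: mentioned often but rarely cited")
    if category_mention_rate < weak:
        findings.append("authority: rarely surfaced on category-level prompts")
    return findings or ["no clear gap at these thresholds"]


print(diagnose(mention_rate=0.7, citation_rate=0.1, category_mention_rate=0.4))
print(diagnose(mention_rate=0.3, citation_rate=0.25, category_mention_rate=0.1))
```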

Both are fixable. But you can only diagnose the difference if your prompt set was built with the research process described above. Guessed prompts produce data that cannot tell you which problem you actually have.

The real value of prompt research is not the list itself. It is the clarity it gives you about where your AI visibility gaps are, and therefore where to focus your content and authority-building efforts next.

Frequently Asked Questions

How many prompts should I track?

A starting set of 30 to 60 prompts is practical for most brands: large enough for statistically meaningful data across intent stages, small enough to manage without overwhelming your reporting. Running 500 prompts from day one without a research foundation produces a lot of data and very little insight.

Should I track branded or unbranded prompts?

Both, but separately. Unbranded prompts should make up roughly 75% of your set because they measure visibility with buyers who are not already looking for you. Branded prompts are valuable for monitoring AI sentiment and detecting outdated information. Mixing the two groups in a single share-of-voice metric inflates your numbers and hides the gaps that matter.

How often do I need to update my prompt list?

Review your prompt set every 60 to 90 days. Buyer language evolves, new competitors enter the market, and AI engines update their training data. The research process described in this article should be repeated on a quarterly cadence, not treated as a one-time setup task.

Does prompt research work differently for different industries?

The methodology is consistent, but the output varies by industry. B2B software companies will find most of their high-value prompts in recommendation and comparison stages. Professional services firms often have more value in education-stage prompts, where AI engines explain categories and buyers are forming their initial understanding of the market.

What is the difference between AEO, GEO, and LLMO?

All three terms describe the same fundamental goal: optimising your brand's presence in AI-generated answers. AEO focuses on appearing in direct answer interfaces like ChatGPT and Perplexity. GEO emphasises the content and structural signals that cause AI engines to cite your pages. LLMO is a broader umbrella covering both. The prompt research methodology in this article applies equally across all three frameworks.