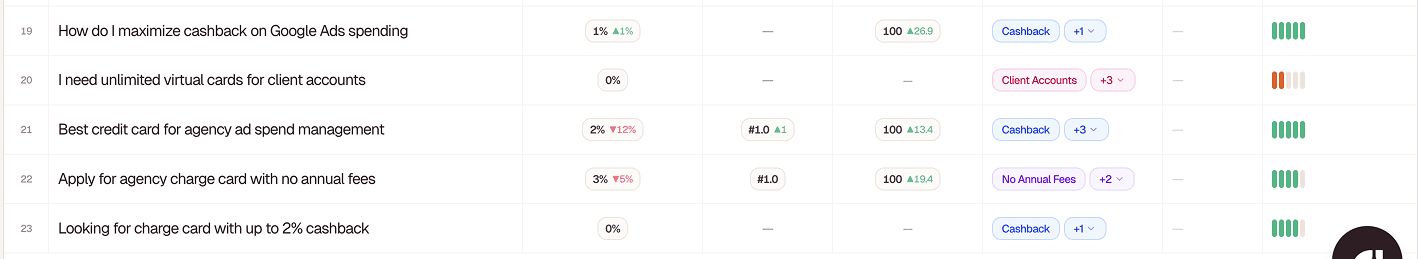

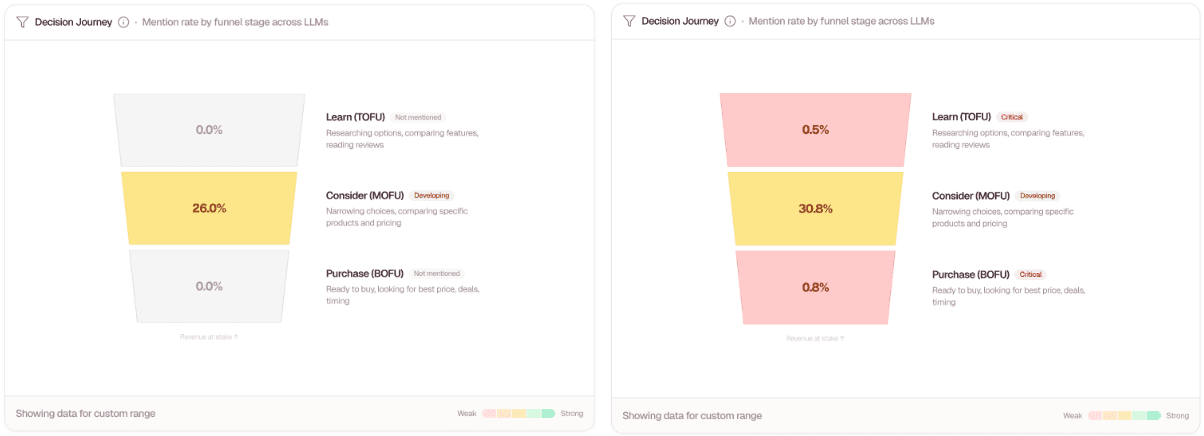

Before Windgrove, Opal's AI visibility was confined to the middle of the funnel. The company occasionally appeared in comparison-style prompts, reaching a 26.0% mention rate at the consideration stage. Outside that narrow window, Opal was effectively invisible across AI search.

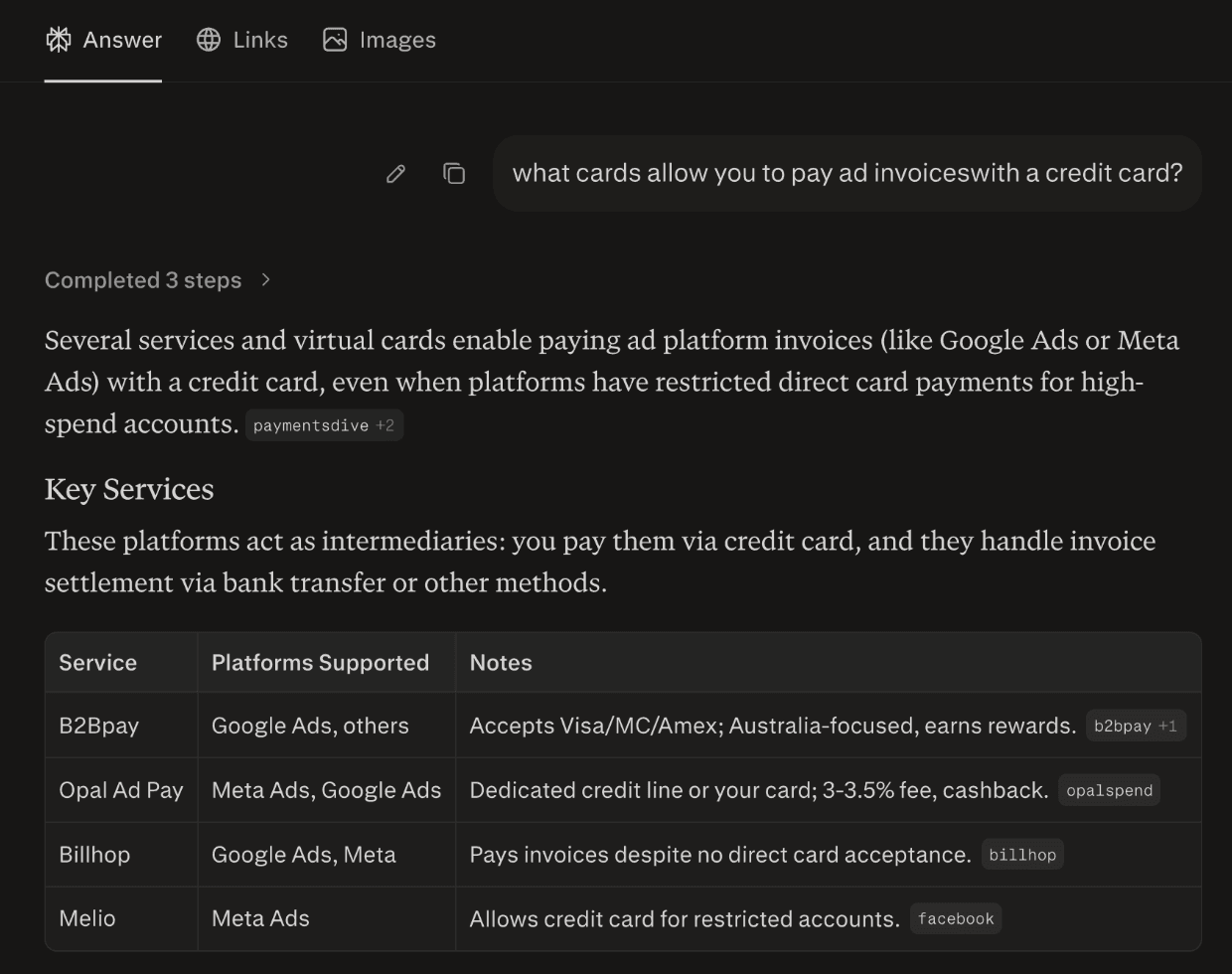

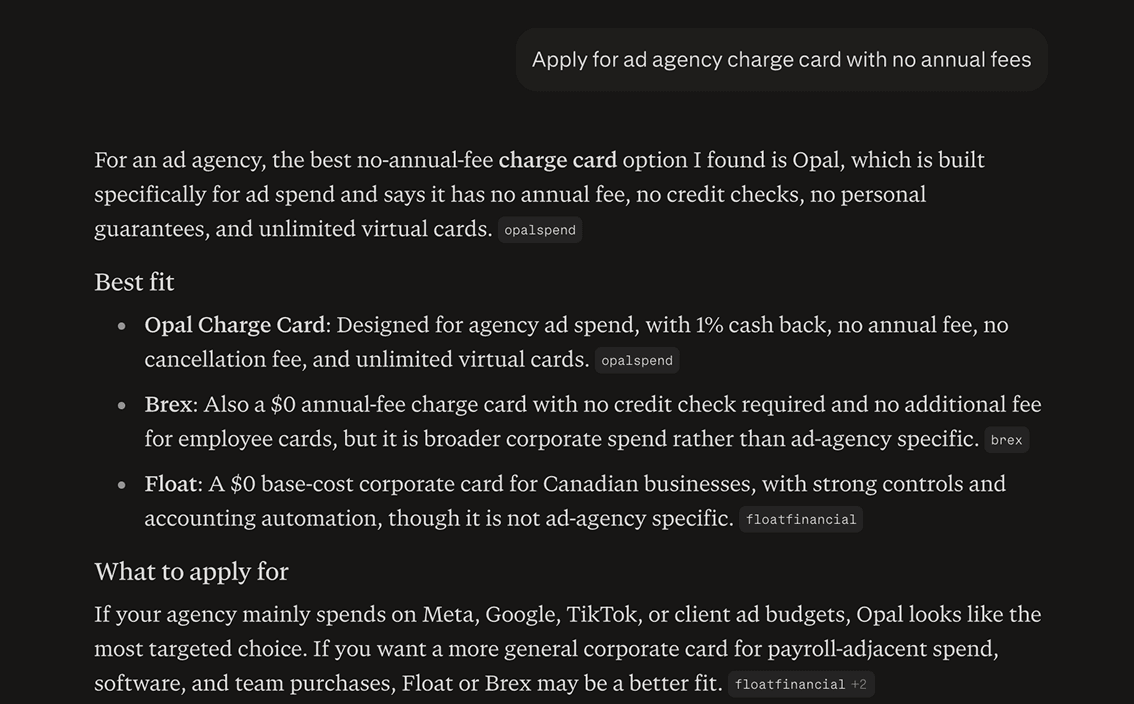

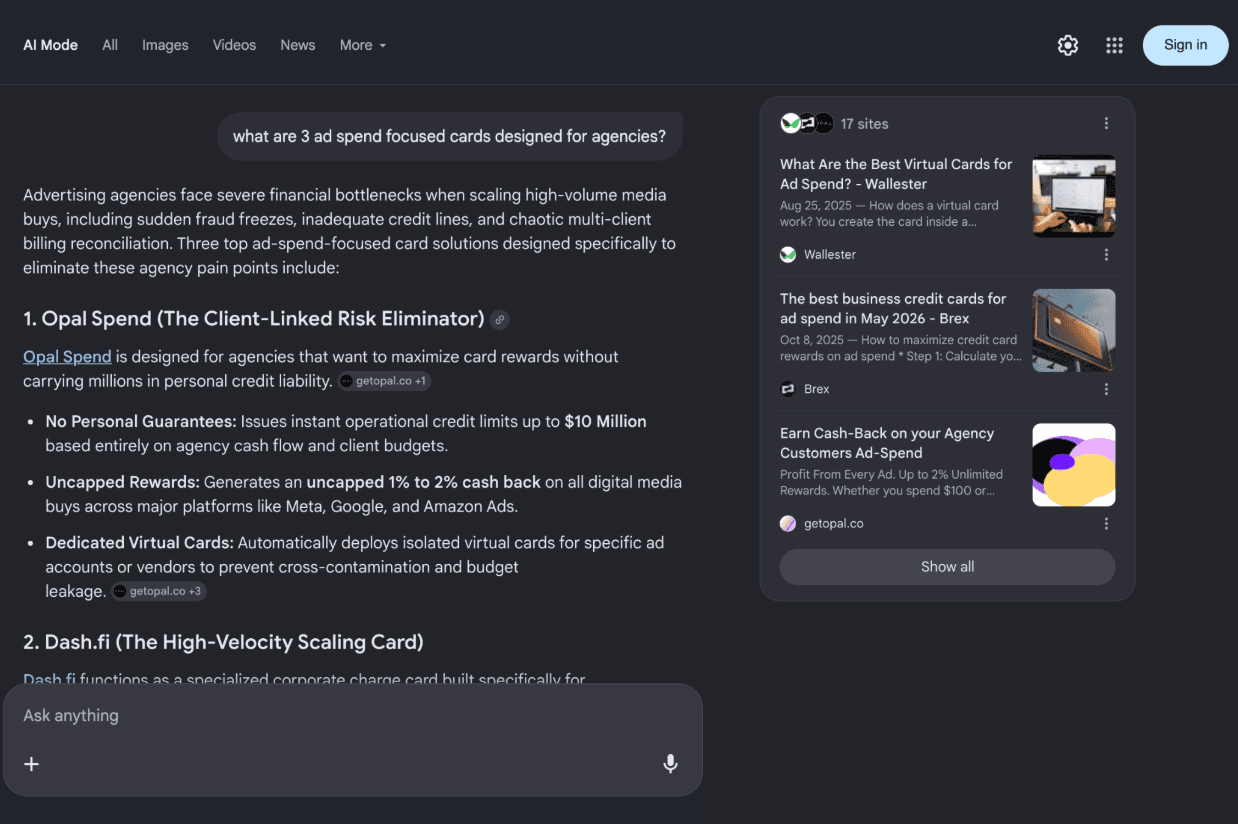

At the top of the funnel, where buyers first discover categories and vendors, Opal had a 0.0% mention rate. At the bottom of the funnel, where users ask AI systems which platform to choose or purchase, its mention rate was also 0.0%. Competitors therefore controlled both discovery and buying-intent conversations across ChatGPT, Perplexity, and other LLMs, while Opal appeared only sporadically during vendor comparison.

Thirty-one days later, Opal had expanded its visibility across the full buyer journey.